Generally, this lean insight thing is about stealing some of the practices that tech startups use to move fast, learn quickly and build scale.

A lot of people think it’s just about being fast and cheap (they’re half wrong) or that lean has no interest in qualitative depth (they’re utterly wrong).

One of the core principles in lean thinking is the Five Whys – keep asking why until someone punches you or you get to the real answer. It’s big in root cause analysis in lean manufacturing, and also a technique that good qualitative researchers have used for years (minus the punching): laddering.

In this third part of the toolbox series, I want to show how modern tools can help build those rich insights: often faster, sometimes cheaper – and in many cases delivering ‘qualitative at scale’ in a way that has only recently been possible.

Here’s a selection of approaches and platforms that help address ‘Five Whys’-type challenges.

Customer closeness, usability research and depth interviews using remote video

As we get more hard data with bigger numbers and more decimal points, we all feel a stronger need for individual human stories.

It’s great that our A/B test samples of 20,000 give us less than 1% error margin at 99% confidence … but we really feel an insight when we see a user’s face, watch how they react, and listen to them describe their experience.
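For anyone who wants to check that margin figure, here is a rough sketch of the arithmetic. It assumes a simple random sample, a single proportion (one arm of the test, not the difference between two arms) and the worst case of a 50/50 split. The function name is my own, purely for illustration.

```python
import math

def margin_of_error(n, z=2.576, p=0.5):
    """Worst-case margin of error for one proportion.

    n: sample size
    z: z-score for the confidence level (2.576 is roughly 99%)
    p: assumed proportion; 0.5 gives the widest margin
    """
    return z * math.sqrt(p * (1 - p) / n)

moe = margin_of_error(20_000)
print(f"{moe:.2%}")  # prints 0.91%
```

Comparing two arms of the test would widen the margin, so treat this as a sanity check and not a full test design.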

(Even Google, the most data-saturated business on the planet, relies heavily on qualitative interviews.)

Cue the explosion in remote video tools for research: rapid, low-cost solutions using smartphones, desktop webcams, GoPros and even Google Glass devices. These tools give us near-instant reactions from consumers that help us understand what’s really going on.

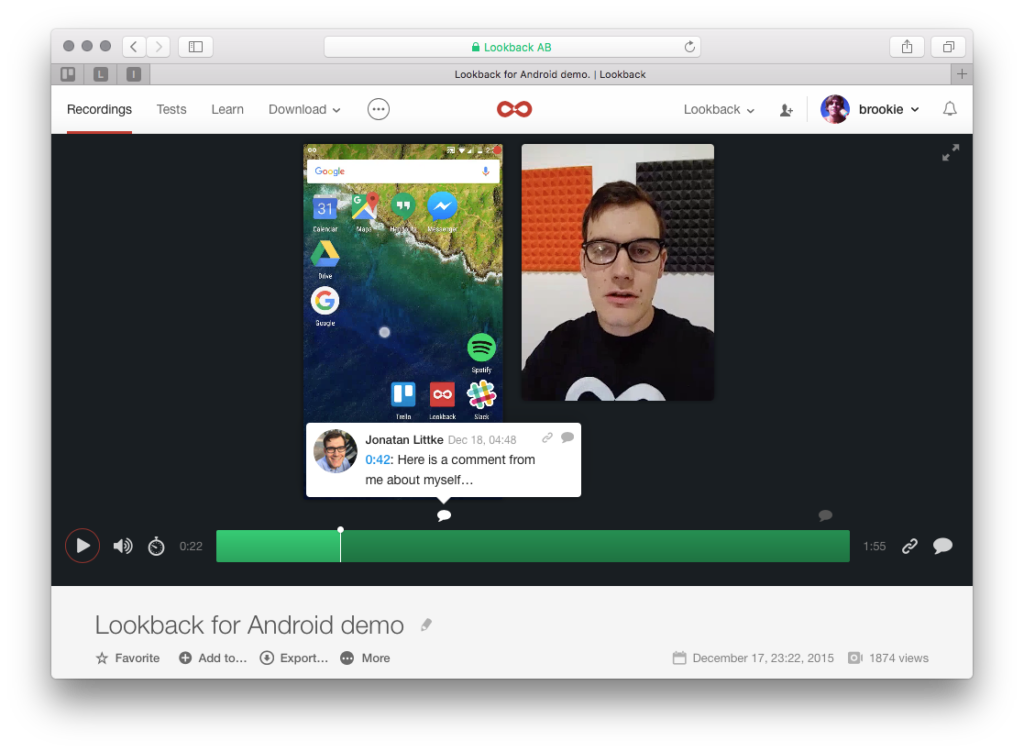

Lookback, for example, is a video-based tool for remote UX testing. It records the face, screen and voice of users anywhere in the world, and can be used live with a moderator or fully unmoderated.

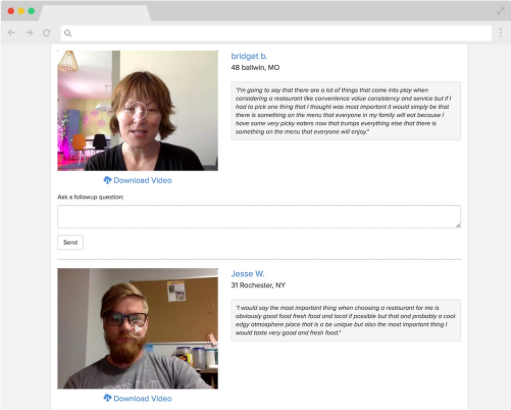

UserTesting is more of a bells-and-whistles solution for recruitment, management and reporting of remote user tests. A global panel of testers can give feedback using template studies in less than an hour if needed.

If you’re looking for more than product feedback, Discuss.io is a video research platform for one-on-one and group conversations. It offers integrated participant recruitment and reporting tools to share findings, and you can choose between software-only and fully managed services.

If you need more of a managed service for research projects or user generated content, Watch Me Think is a hybrid platform with a consulting team and video management software.

Applications include narrated videos of consumers in their kitchens, product tests and segmentation deep-dives. You can also subscribe to the ‘Consumer Closeness Toolbox’ – a monthly stream of topical trend videos with options for video omnibus questions to panel members.

And if you want something fully automated, fast and cheap – and you need consumers in the US – QualNow can get you near instant videos of them answering your questions.

It promises feedback from its consumer panel in minutes: $250 will get you 10 people from the QualNow panel answering 5 questions – including incentives, computer-generated transcripts and access to built-in analysis tools.

Collaborative brainstorms, forum discussions and focus groups with digital tools

Video is great, but it’s not the only route to Five Whys depth. Sometimes, a brief demands more deliberation, more interaction between consumers or more projective approaches.

Digsite is a qualitative research platform with features for co-creation, brainstorming and testing new ideas through group discussions, whiteboards and stimulus markup tools.

It can be used on a software-only basis; or projects can be fully managed as ‘sprints’ with social recruitment of participants, moderation, analysis and reporting turned round in a few days.

Several other platforms have a similar software-and-services model: Incling, Further, CMNTY, 2020 Research and iTracks all have their own technology for qualitative research and small communities, along with professional services for project management, recruitment and research design.

A few recent startups give us a glimpse of how these approaches may develop over the next few years.

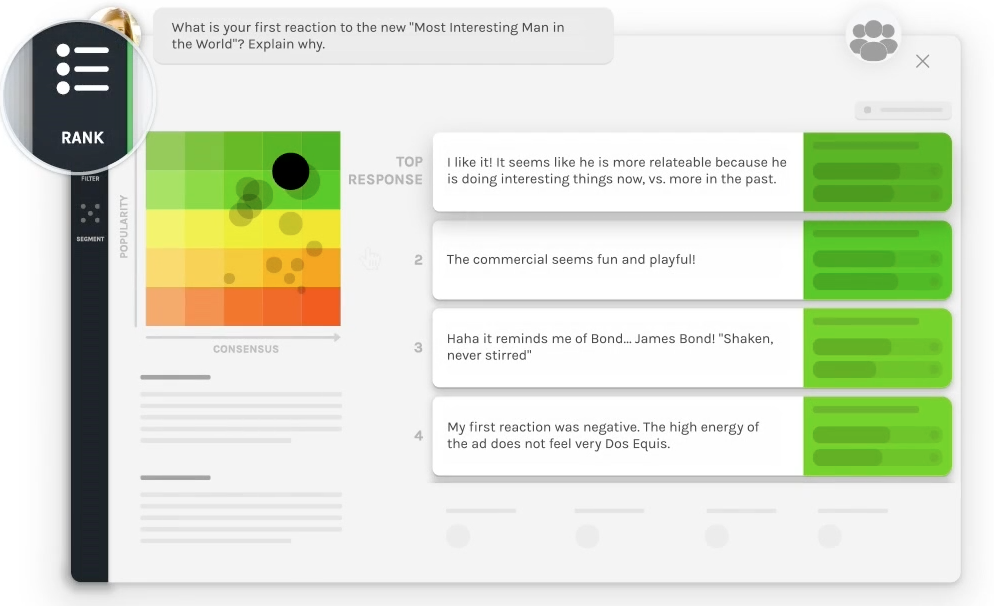

Using VCM (Very Clever Maths … more commonly and loosely known as ‘Artificial Intelligence’), recent startups Remesh and Quester have developed ‘large scale qualitative research platforms’.

In Remesh, group discussions with hundreds or thousands of participants take place with the help of automated tools to support a moderator, analysing responses in real time and helping to adapt questions on the fly.

Watch this space for more ‘AI-driven qual research’ platforms like these two.

Neuromarketing tools that tell us what people really feel

Thanks, behavioural economics, emotion analytics and neuropsychology.

You’ve brought us such vacuously emoting ad campaigns as this one for oven chips and this one for a bank that was bailed out with my taxes.

Thanks a lot.

But I still live in hope that consumer neuroscience will bring us something more noble.

It’s taught us that we’re unreliable witnesses to our own behaviour; we can’t remember what we did yesterday – let alone rationalise why; and we’re driven by weird – sometimes dark – biases that we don’t want to own up to.

So if you want to get past these limitations in direct questioning, the options are increasing all the time.

Implicit Association Tests can be dropped into surveys using tools like iCode or Sentient Prime. These use a combination of reaction time and accuracy to build models of ‘true’ feeling towards brands or concepts – which often differ from considered, rationalised answers.
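To make the ‘reaction time and accuracy’ idea a bit more concrete, here is a minimal sketch of how an implicit score might be calculated. It is loosely modelled on the published D-score approach to IAT scoring and is not the method used by any of the tools named above. The 600 ms error penalty, the function names and the sample data are all assumptions for illustration.

```python
from statistics import mean, stdev

ERROR_PENALTY_MS = 600  # assumption: fixed penalty for a wrong answer

def block_latencies(trials):
    """trials: list of (reaction_time_ms, was_correct) pairs.

    Wrong answers are replaced by the block's mean correct latency
    plus a penalty, so accuracy feeds into the score.
    """
    correct = [rt for rt, ok in trials if ok]
    penalised = mean(correct) + ERROR_PENALTY_MS
    return [rt if ok else penalised for rt, ok in trials]

def implicit_score(compatible, incompatible):
    """Difference in mean latency between blocks, scaled by the
    standard deviation of all trials. A positive score means faster
    responses in the compatible block (e.g. brand + positive words)."""
    a = block_latencies(compatible)
    b = block_latencies(incompatible)
    return (mean(b) - mean(a)) / stdev(a + b)

# Hypothetical data for one respondent
compatible = [(620, True), (580, True), (640, True), (600, True)]
incompatible = [(820, True), (900, False), (780, True), (860, True)]

score = implicit_score(compatible, incompatible)
print(round(score, 2))
```

A real study would use many more trials per respondent and would filter out implausibly fast or slow responses before scoring.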

Webcams have helped to democratise facial expression analysis and remote eye-tracking research: platforms such as Emotion Research Lab, Crowd Emotion and Affectiva have been widely deployed to test static creative, video content and even store layouts.

Using physical sensors is now also much more affordable: EEGs (for brain activity scanning), galvanometers (to read stress level variations through skin conductance) and EMG monitors (to detect muscle activity and arousal) can be deployed in popup labs by companies like Bitbrain and analysed through platforms like iMotions.

Some more recent developments in this space show how much further we still have to go.

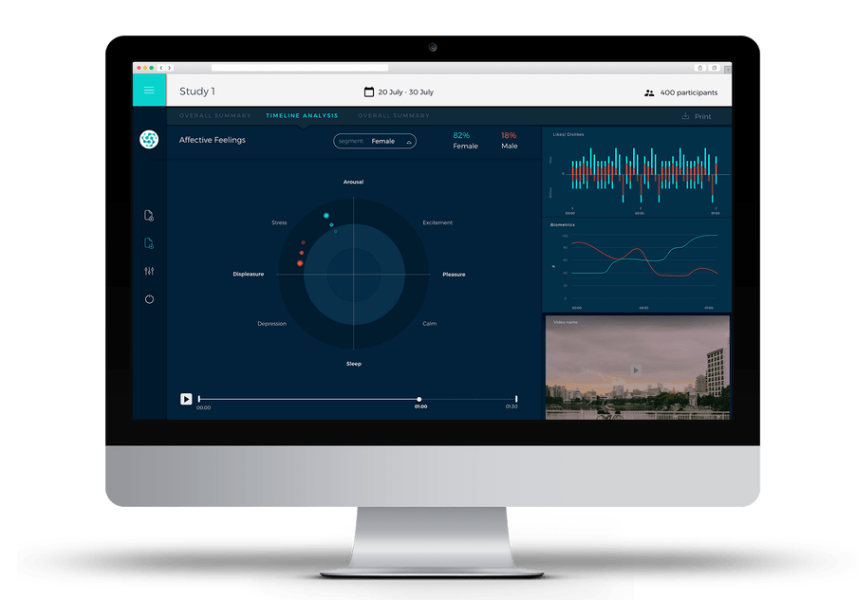

Mindprober is a fully automated neuroscience testing solution for video. Users configure a study online, a panel of testers equipped with biometric sensors watches the content, and results are available in real time in an online dashboard.

Beyond Verbal analyses recordings of customer calls to sales teams, support centres or claims departments. Emotional valence, arousal and temper levels are measured from vocal intonation; responses are classified into one of 11 major mood groups; and changes can be tracked over time.

Emaww is a recent startup that has developed a solution to measure a user’s emotional state based on their smartphone touchscreen behaviour (firmness, speed of hand gestures).

Let’s hope these new tools lead to something more than just faux-passionate 30-second spots for processed potato pieces.

Machine learning tools to make sense of unstructured text, image and video

Structured data is tidily arranged and meaningfully labelled so it can easily be read by machines.

Unstructured data – much of it qualitative – isn’t tidy or stored in a defined data model: this includes reviews, social posts, call centre recordings, images, video and other audio files. These are rich sources of potential insight, which – until recently – could only be extracted with painstaking content analysis by humans.

But advances in machine learning have meant these unstructured data sources can be decoded, classified and analysed much more reliably. And much faster and more cheaply.

For text, Natural Language Processing techniques enable speech in audio or video files to be transcribed automatically, and large volumes of text to be segmented by topic or by sentiment.
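As a toy illustration of segmenting text by sentiment, the sketch below sorts comments into groups using two tiny word lists. Commercial platforms rely on trained language models, not hand-picked words; the lists, function names and sample comments here are invented for the example.

```python
import re

# Assumption: tiny hand-made word lists, for illustration only
POSITIVE = {"love", "great", "easy", "fast"}
NEGATIVE = {"slow", "broken", "confusing", "expensive"}

def sentiment(text):
    """Label a comment by counting positive and negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

def segment(comments):
    """Group comments under their sentiment label."""
    groups = {"positive": [], "negative": [], "neutral": []}
    for comment in comments:
        groups[sentiment(comment)].append(comment)
    return groups

comments = [
    "Love the new app, so easy to use",
    "Checkout is slow and confusing",
    "Delivered on Tuesday",
]
groups = segment(comments)
for label, items in groups.items():
    print(label, len(items))
```

Word counting like this misses sarcasm, negation and context, which is exactly where the machine learning approaches earn their keep.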

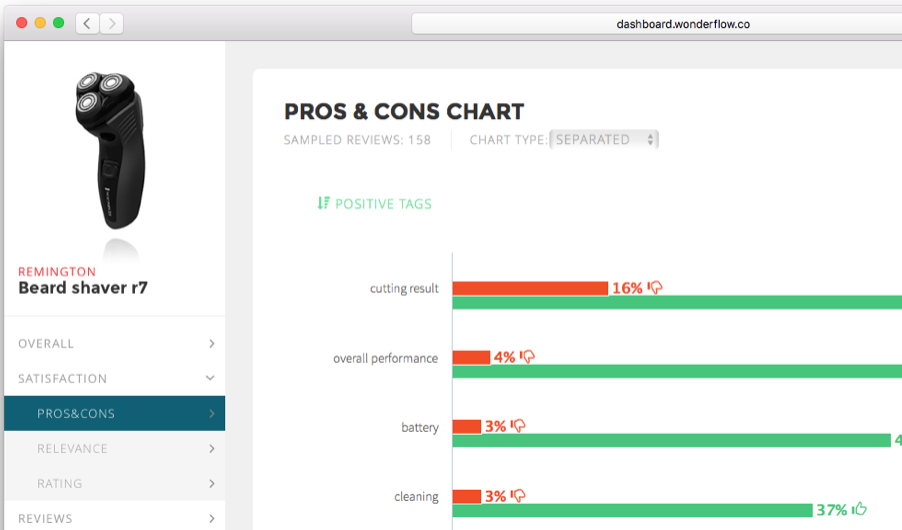

Tools like Wonderflow, Thematic and Chattermill use text input from surveys, reviews or any other source of customer feedback to distill meaning and track trends.

Object recognition within images has also advanced quickly over the last few years. This is the same technology that allows you to search Google Photos for ‘Eiffel Tower’, ‘wine glass’ or ‘cat’.

Picasso Labs, Glimpzit and Beautifeye offer visual analytics tools to help brands decode images from Instagram, Facebook and Twitter.

This same approach is being applied to video – just a lot of still images, after all – by platforms like Living Lens and Nail Biter.

Combining both of these approaches, Signoi provides a quantitative semiotics platform that analyses large volumes of text and image data to decode cultural signals.

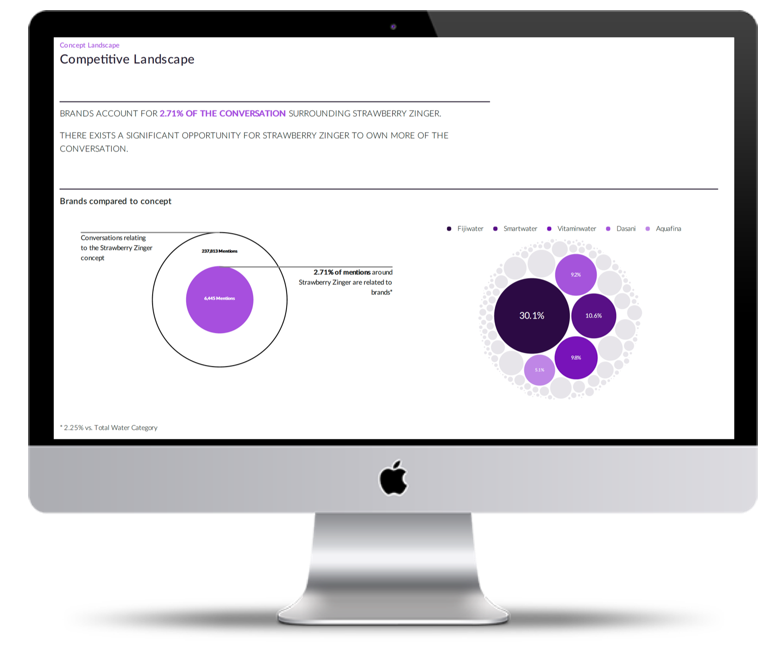

Finally, Black Swan Data uses both visual and text analytics algorithms in two distinct applications.

Its Dragonfly product emulates how both the eye and brain work to predict the most salient areas of any visual content: marketing materials, store designs or digital products.

And its Sonar product tests concepts without asking a single consumer for feedback: using NLP to deconstruct the description of an idea, it searches through millions of social posts to find matching language and estimate likely interest levels for the concept.

As with AI-driven qual, expect to see many more solutions like these come to market in the next few years.

So these are just a handful of platforms that can help with the ‘five whys’ pillar of lean insight.

Qualitative depth features heavily in many of these tools; but that doesn’t have to mean small scale. New technologies are challenging the distinction between qual and quant.